What Is HappyHorse-1.0?

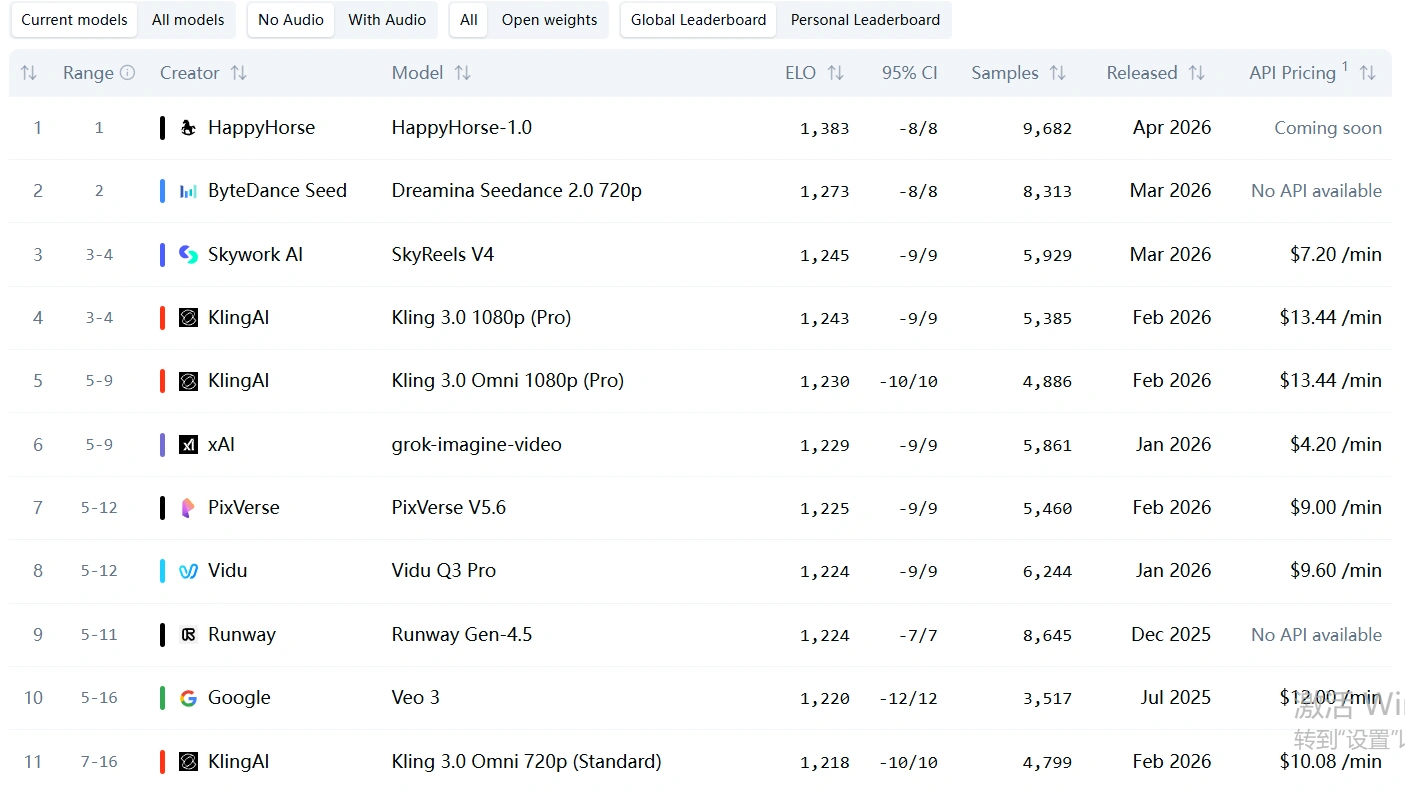

Out of nowhere, HappyHorse-1.0 has taken over the Artificial Analysis leaderboard.

- Image-to-Video: Outperforms all major competitors

- Text-to-Video: Dominates with a significant margin

- Elo score: 1336 (vs. 1273 for Seedance 2.0)

That 63-point gap is massive, roughly the same as the spread between the #2 and #15 models on the leaderboard.

In simple terms: this isn’t an upgrade. It’s a leap.
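To make the gap concrete, the standard Elo expected-score formula converts a rating difference into a predicted head-to-head preference rate. The sketch below assumes the leaderboard uses the conventional logistic curve with a 400-point scale; Artificial Analysis's exact scoring method may differ, and the function name is illustrative only.

```python
def elo_expected_score(rating_a: float, rating_b: float) -> float:
    """Probability that A is preferred over B under the standard Elo model.

    Assumes the conventional 400-point logistic scale (not confirmed for
    this particular leaderboard).
    """
    return 1.0 / (1.0 + 10 ** ((rating_b - rating_a) / 400))


happyhorse = 1336  # Elo score reported for HappyHorse-1.0
seedance = 1273    # Elo score reported for Seedance 2.0

p = elo_expected_score(happyhorse, seedance)
print(f"Expected preference for HappyHorse-1.0: {p:.1%}")
```

With the reported scores this prints about 59.0%: under these assumptions, HappyHorse-1.0 would be expected to win roughly 59 out of every 100 head-to-head comparisons against Seedance 2.0.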

Core Features That Make It Stand Out

1. Native Multimodal Architecture

- ~15B-parameter unified Transformer

- Built-in text-to-video, image-to-video, and audio generation

- No stitched pipelines—everything is generated together

Result: Perfect synchronization between visuals, motion, and sound

2. Ultra-Fast Inference (Game Changer)

- 5-second 1080p video: ~38 seconds

- 256p preview: ~2 seconds

Seedance 2.0, by comparison, has:

- Slower rendering

- Higher cost

- Limited accessibility

👉 With ~2-second previews, HappyHorse turns video generation into an interactive creative tool, not a production bottleneck.
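The timing figures above reduce to two quick ratios: how many seconds of generation each second of 1080p output costs, and how much faster a 256p preview is than a full render. This sketch uses only the approximate numbers quoted in this article and assumes both were measured on the same (unspecified) hardware; the constant and function names are hypothetical.

```python
CLIP_SECONDS = 5.0           # length of the 1080p clip quoted above
RENDER_1080P_SECONDS = 38.0  # approximate 1080p generation time
PREVIEW_256P_SECONDS = 2.0   # approximate 256p preview generation time


def compute_per_output_second(render_s: float, clip_s: float) -> float:
    """Seconds of generation time needed per second of finished video."""
    return render_s / clip_s


full_render_ratio = compute_per_output_second(RENDER_1080P_SECONDS, CLIP_SECONDS)
preview_speedup = RENDER_1080P_SECONDS / PREVIEW_256P_SECONDS

print(f"1080p: {full_render_ratio:.1f}s of generation per second of video")
print(f"256p preview is ~{preview_speedup:.0f}x faster than a full 1080p render")
```

This works out to about 7.6 seconds of generation per second of 1080p video, with previews roughly 19x faster. The fast, iterative feel comes mainly from the preview tier; full-resolution renders are still slower than real time.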

3. Advanced Audio-Visual Sync

- Accurate lip-sync

- Context-aware sound effects (footsteps, environment, impact)

- Multi-language support (English, Chinese, Japanese, Korean, and more)

This is where most AI video models fail—and where HappyHorse excels.

The Real Breakthrough: Unrestricted Mode

What Does “Unrestricted” Mean?

Most AI models today come in two versions:

| Version | Description |
|---|---|
| Standard (Safe) | Heavy restrictions, limited creative freedom |
| Unrestricted | Greater flexibility, fewer limitations, broader creative scope |

Why HappyHorse-1.0 Unrestricted Changes Everything

1. True Creative Freedom

- More natural human interactions

- Emotionally expressive scenes

- Cinematic camera control

You’re no longer “generating clips”—you’re directing scenes.

2. Fewer Reference Constraints

Compared with Seedance 2.0, HappyHorse is:

- Less restrictive about input images

- Better at keeping subjects consistent

- More usable in real-world workflows

Ideal for:

- Character consistency

- Influencer-style content

- AI advertising

3. Director-Level Control

- Camera movement (pan, dolly, orbit)

- Timing and pacing

- Multi-subject coordination

This is the closest AI video has come to a genuine filmmaking tool.

Real-World Performance Examples

✔ Physics Simulation

Liquid mixing behaves naturally—no visual glitches.

✔ Complex Motion (Basketball)

From release → rim contact → net—perfect continuity.

✔ Immersive Sound Design

Footsteps on snow, ice cracking—fully synchronized.

✔ Style Control

From 90s animation to cyberpunk realism, styles are deeply integrated—not just filters.

Why It’s Beating Seedance 2.0

Seedance 2.0 Limitations:

- Slower generation

- High cost barrier

- Strict restrictions (especially with human references)

HappyHorse Strategy:

- Faster generation

- Unrestricted creativity

- Fully integrated audio-visual pipeline

Result: Massive user migration potential

Who Built It? (Speculation)

There’s no official confirmation yet, but likely candidates include:

- Alibaba (Wan series)

- Google (Veo)

- ByteDance (next-gen models)

- xAI (Grok Imagine)

Prevailing speculation: most likely backed by a top-tier Chinese AI lab

Best Use Cases for HappyHorse-1.0 Unrestricted

- Short-form video content (TikTok, Reels)

- AI-generated ads

- Cinematic storytelling

- Character-driven content

- Experimental / edgy creative projects

How to Actually Use This Today

Here’s the reality:

👉 Models like HappyHorse-1.0 are powerful—but not always accessible, stable, or production-ready for everyday users.

That’s where platforms like Viyou AI come in.

Why Use Viyou AI?

Instead of chasing unstable or invite-only models, Viyou AI gives you:

- ✅ Image-to-Video

- ✅ Text-to-Image + Character Consistency

- ✅ Fast generation without long queues

- ✅ Practical tools for creators (not just demos)

What You Can Do Right Now

- Turn static images into cinematic videos

- Create consistent characters across scenes

- Produce viral-ready short videos

- Experiment with expressive, high-detail prompts

👉 In short:

You don’t need to wait for HappyHorse: you can already build similar workflows today.

Final Verdict

HappyHorse-1.0 Unrestricted isn’t just another model.

It represents a shift toward:

- Faster generation

- Fewer restrictions

- True creative control

👉 The AI video space is no longer about “who generates better clips”

👉 It’s about who gives creators the most freedom